coding machine learning, math, some other stuff - nov/dec 2020

Shoving two months into one so it looks like I've done more than I have (also laziness)

Hi everyone, good on you and me for getting through this sinking ship [Earth]. Thanks for taking the time to read this rambling, and I’ll try to keep it short this time, promise.

If you want more of whatever this is, delivered every two months in haphazard fashion, you’d love subscribing to this blog. Seriously, do it, please.

Things aren’t actually that bad. Here are a few thingies that I’ve done over the past couple months. In somewhat-sort-of chronological order.

Also, although I may maintain a sort of distanced aloofness in my *casual* “writing”, the stuff that I’m trying to comprehend is truly beautiful and fascinating, and I have the greatest admiration for the people at the forefront of scientific discovery in these fields. Okay, time to put those dark, mirrored shades of aloofness back on.

Learning PyTorch

PyTorch has been gaining exponential (well mathematically, I’m not sure if it’s exponential. People always say exponential when it usually is not) popularity, especially in the scientific research community, so I decided to go with that.

I completed my first algorithm (Univariate Linear Regression! Barely even machine learning but hey I’m counting it) on Nov. 15th. It’s the dinkiest little linear regression test you’ve seen, but words can’t express how pumped I was to see it actually model an optimal line of best fit. Here’s a 1994-quality gif of the program.
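For the curious, here is a minimal sketch of the idea behind that kind of program. It is not the original PyTorch code: it is plain Python with no libraries, and the data points, the learning rate, and the `fit_line` helper are all made up for illustration.

```python
# Plain-Python sketch of univariate linear regression trained with gradient descent.
# The data, hyperparameters, and the helper name `fit_line` are illustrative assumptions.

def fit_line(xs, ys, lr=0.05, epochs=1000):
    """Fit y ≈ w * x + b by minimizing mean squared error with gradient descent."""
    w, b = 0.0, 0.0
    n = len(xs)
    for _ in range(epochs):
        # How far the current line is from each data point
        errors = [(w * x + b) - y for x, y in zip(xs, ys)]
        # Gradients of MSE = (1/n) * sum(error^2) with respect to w and b
        grad_w = (2.0 / n) * sum(e * x for e, x in zip(errors, xs))
        grad_b = (2.0 / n) * sum(errors)
        # Step downhill
        w -= lr * grad_w
        b -= lr * grad_b
    return w, b


xs = [0.0, 1.0, 2.0, 3.0, 4.0]
ys = [1.0, 3.0, 5.0, 7.0, 9.0]  # made-up points lying exactly on y = 2x + 1
w, b = fit_line(xs, ys)
print(f"w = {w:.3f}, b = {b:.3f}")
```

With these toy points the fitted slope and intercept should land very close to 2 and 1. A PyTorch version would typically let autograd compute the two gradients instead of writing them out by hand.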

I hear you, I hear you. Ok dude, that was just a line. What else did you do.

Well, to get better at PyTorch, I followed along with a few miniprojects using this fantastic 10-hour tutorial by Aakash [could not find his last name] from FreeCodeCamp. With the aid of these tutorials, and a textbook I found online, I created a few starter miniprojects, and wrote an article detailing the mathematics behind each of them.

Convolutional Neural Network for the CIFAR-10 dataset (ok, I didn’t write an article on this one)

EmpidAI - identifying birds with computer vision

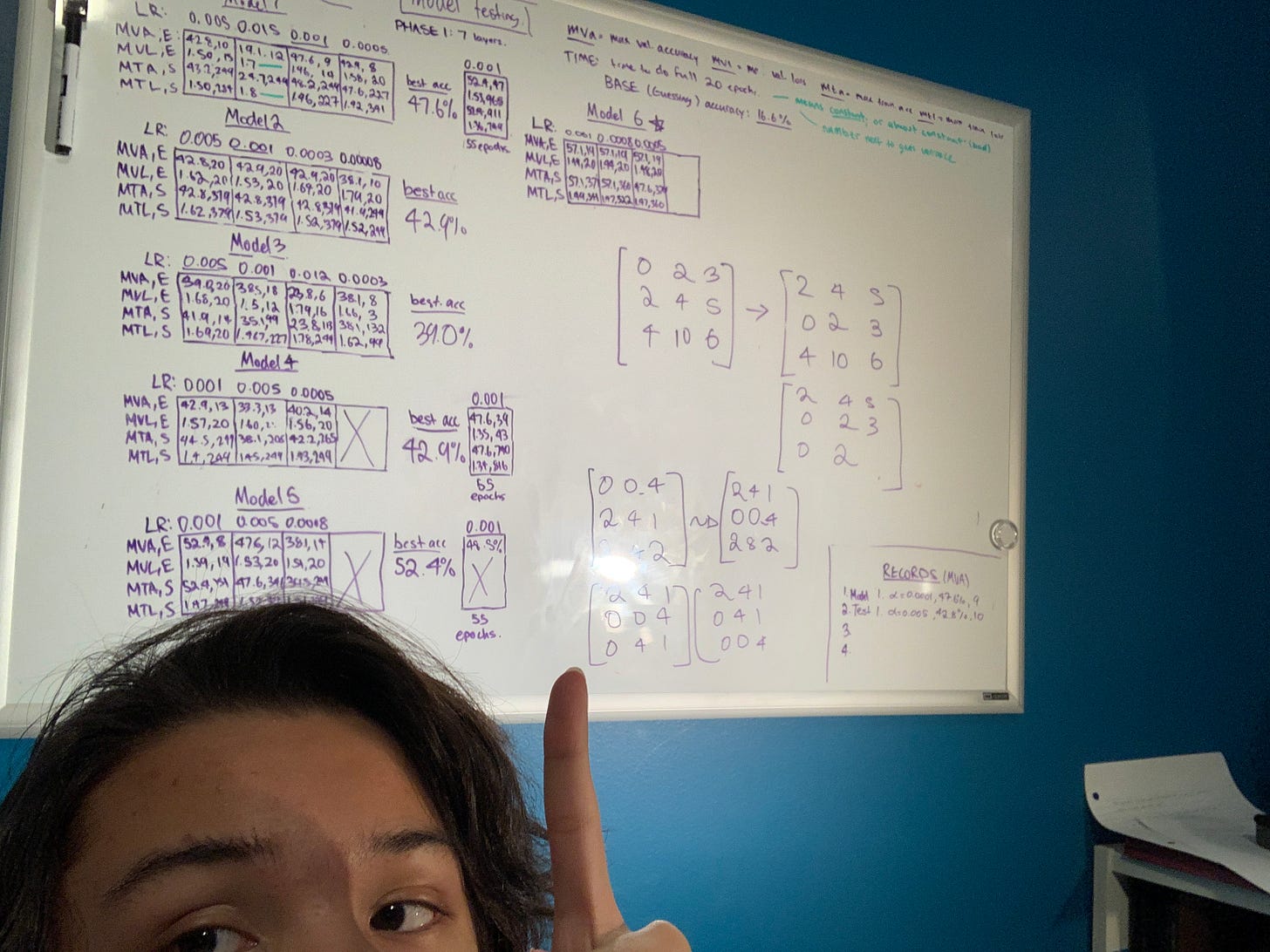

Once I was sort-of-not-really prepared to go onto my first solo project, I chose birds, because a) birds are great and b) there is no b. The specific task at hand is to create a computer vision model to differentiate between 6 species of birds that look quite remarkably similar, and belong to the same family (except for two). It’s called EmpidAI since Empidonax is the genus that most of the birds belong to. In human speak, these are known as Flycatchers.

I’m programming it, obviously, in PyTorch, and have decided (a few days into the project) that using a lightweight wrapper would be helpful - I’m using PyTorch Lightning, mostly because it lets me connect more easily to Google Colab’s TPUs (basically these processors that make things run fast).

That’s about it on that project for now - I’m nearing the end-stages (I think), but I’ll save the details for an article later on. What I can say is that I’m in the model-training phase right now, and it is a lot of waiting.

Okay. What next, computer boy?

Learning linear algebra (for real this time)

I did a couple weeks of low-effort linear algebra research back in October, but after having a come-to-jesus moment (what does that even mean), I realized I’d have to go into it anew and do it rigorously. I’m not complaining, since linear algebra is such a fundamentally beautiful topic - I’m stunned, over and over again, by how you can look at a matrix in so many ways.
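One small example of those "many ways", as a sketch in plain Python with made-up numbers: the same matrix-vector product can be read row by row (dot products) or column by column (a linear combination of the columns). The variable names here are just for illustration.

```python
# Two ways to read the same matrix-vector product A x (plain Python, made-up numbers).

A = [[1, 2],
     [3, 4],
     [5, 6]]
x = [10, 1]

# Row picture: each output entry is the dot product of one row of A with x.
row_view = [sum(a * xi for a, xi in zip(row, x)) for row in A]

# Column picture: the output is a linear combination of A's columns,
# weighted by the entries of x.
columns = list(zip(*A))  # transposes A into its columns: (1, 3, 5) and (2, 4, 6)
col_view = [0] * len(A)
for weight, col in zip(x, columns):
    col_view = [acc + weight * c for acc, c in zip(col_view, col)]

print(row_view)
print(col_view)
```

Both lists come out identical, which is the whole point: the arithmetic is the same, only the way of looking at the matrix changes.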

FYI, I’m following along with (the amazing) Gilbert Strang’s famous 18.06 Linear Algebra lecture series from MIT OCW, but have also been able to get my hands on his textbook, Linear Algebra and Its Applications (4th edition), which works nicely with the lectures. The lectures are pretty lowkey to watch, but the questions are quite difficult, so watch out if you’re doing that. yeah.

Learning C++

I’ve been learning C++ on the side, mostly to get the idea of a lower-level programming language, but also because I think it’s good to know more than just one language. Warning that I’m not really great at it, like at all! I made tic tac toe, so I’m proud of that achievement. I’m not really sure if I love or hate programming yet, but it sure is fun, sometimes, like 25% of the time!

TKS/501cThree

Had the privilege of working with an amazing (and tolerant!) team on a business proposal competition (through TKS, a STEM program) for 501cThree, a charity devoted to bringing clean water to cities plagued by crumbling infrastructure and lead.

We’ll be pitching to them sometime in early January. I’ll be presenting, which spells certain doom for our group, jk, i hope, haha. If you’re reading this, group members, I am extremely confident in my speaking abilities. ;).

Writing

I write to understand things. (wow, what an insanely dorky sentence). I wrote a few articles this month on a variety of subjects, some of them quick, and short (most of them not though), all with equally snobby and ridiculous not-concise names.

The Math of Gradient Descent With Univariate Linear Regression

Gradient Descent Update Rule for Multiclass Linear Regression (mathy)

Using Span and Linear Combinations to understand Matrix Non-invertibility*

* award for worst article title, possibly ever

The Little Things

Working on art for the prototype of an educational mobile game for bird conservation, Find the Birds.

Continuing work with Sustainabiliteens on climate stuff.

Reading Man’s Search for Meaning by Viktor E. Frankl.

Found out how to type backticks `wow`!

Got a standing desk AND a whiteboard (yesyesyesyes)

Thanks for tolerating the rambling folks, it means a lot. Have a good one.

Oh shoot, almost forgot shameless self promo and snobby third-person tagline that I actually (surprise) wrote myself.

Adam Dhalla is a high school student out of Vancouver, British Columbia. He is fascinated with the outdoor world, and is currently learning about emerging technologies for an environmental purpose.

To keep up, follow his Instagram and his LinkedIn. For more similar content, subscribe to his newsletter here. Also email me (adamdhalla@protonmail.com) if you want that.